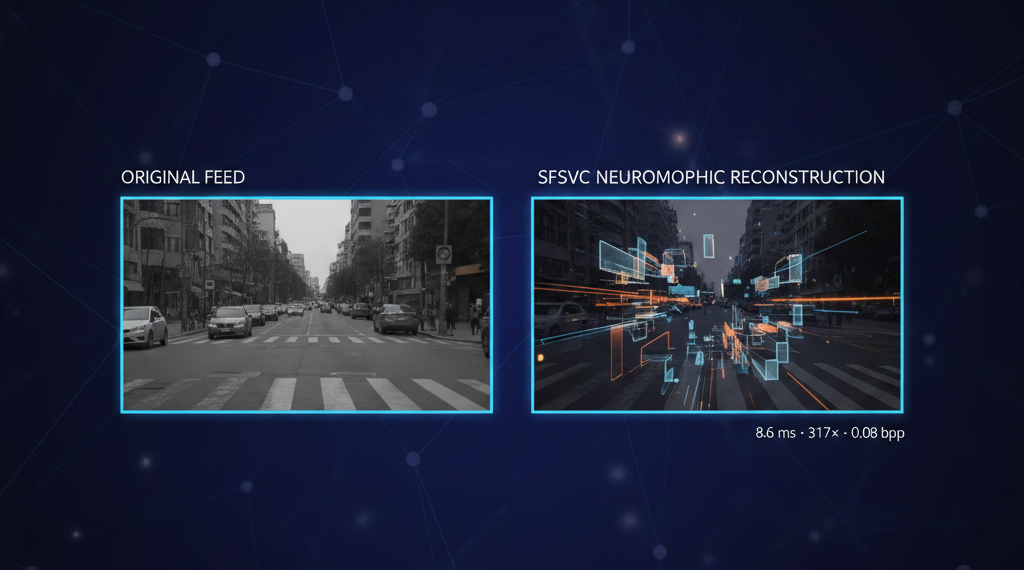

Neuromorphic codec.

Crack details preserved. 94% less bandwidth.

Incoming drone frames are converted into spike-frequency representations that capture structural anomalies (hairline cracks, spalls, delamination) while cutting bandwidth by 94%. The codec preserves the geometry needed for scoring and discards perceptually redundant pixels, enabling real-time perception at under 8 W on embedded hardware.
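
To make the idea of a spike-frequency representation concrete, here is a minimal sketch of generic rate coding. It is not the codec's actual implementation. It assumes a grayscale frame, a hypothetical crack-free reference frame (for example a background estimate), and illustrative parameters `threshold` and `max_rate_hz`. Each pixel that deviates enough from the reference is assigned a spike rate proportional to the deviation; every other pixel emits nothing.

```python
from typing import Dict, List, Tuple

Frame = List[List[int]]          # grayscale intensities, 0..255
Events = Dict[Tuple[int, int], float]  # (row, col) -> spike rate in Hz


def rate_encode(frame: Frame, reference: Frame,
                threshold: int = 16, max_rate_hz: float = 200.0) -> Events:
    """Map each pixel's deviation from the reference to a spike rate.

    Pixels deviating by less than `threshold` produce no event, so uniform
    surface regions cost no bandwidth. Both parameters are illustrative
    assumptions, not values from the product.
    """
    events: Events = {}
    for y, (row, ref_row) in enumerate(zip(frame, reference)):
        for x, (pixel, ref) in enumerate(zip(row, ref_row)):
            delta = abs(pixel - ref)
            if delta >= threshold:
                events[(y, x)] = max_rate_hz * delta / 255.0
    return events


def fraction_saved(events: Events, frame: Frame) -> float:
    """Share of pixels that did not need to be transmitted."""
    total = sum(len(row) for row in frame)
    return 1.0 - len(events) / total


# Toy 8x8 surface (intensity 200) with a dark diagonal "crack" (intensity 40).
size = 8
reference = [[200] * size for _ in range(size)]
frame = [[40 if x == y else 200 for x in range(size)] for y in range(size)]

events = rate_encode(frame, reference)
saved = fraction_saved(events, frame)
print(f"{len(events)} events, {saved:.1%} of pixels skipped")
```

In this toy case only the crack pixels generate events, and their positions (the geometry) survive intact. Real savings depend on the scene, the threshold, and how events are serialized, so the figure printed here says nothing about the 94% claim above.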